The Microsoft AI research division accidentally leaked dozens of terabytes of sensitive data starting in July 2020 while contributing open-source AI learning models to a public GitHub repository.

Almost three years later, the leak was discovered by cloud security firm Wiz, whose security researchers found that a Microsoft employee had inadvertently shared the URL for a misconfigured Azure Blob storage bucket containing the leaked data.

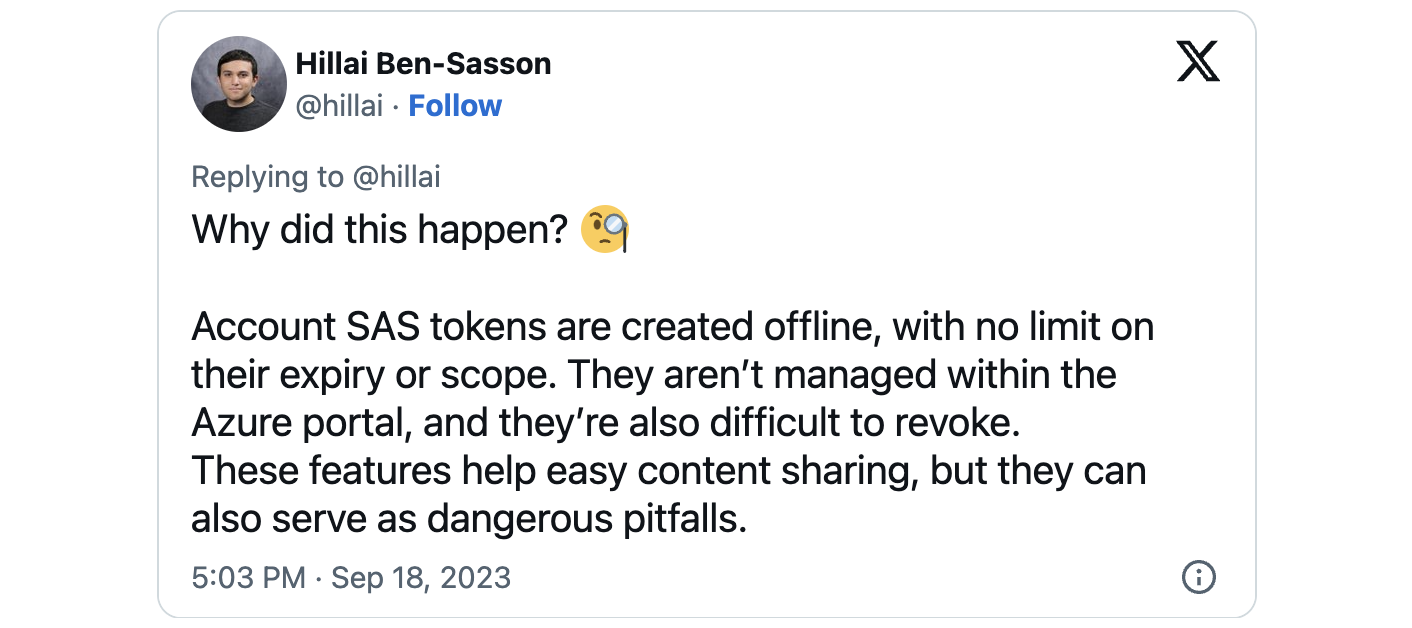

Microsoft linked the data exposure to the use of an excessively permissive Shared Access Signature (SAS) token, which allowed full control over the shared files. This Azure feature enables data sharing in a manner described by Wiz researchers as challenging to monitor and revoke.

When used correctly, Shared Access Signature (SAS) tokens offer a secure means of granting delegated access to resources within your storage account.

This includes precise control over the client’s data access: specifying the resources they can interact with, defining their permissions on those resources, and determining how long the SAS token remains valid.
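The controls described above travel as query parameters on the SAS URL itself. As a minimal sketch, assuming the documented Azure SAS parameter names (`sp` for permissions, `se` for expiry, `st` for start time, `sr` for the signed resource on a service SAS, `srt` for resource types on an account SAS), the following Python uses only the standard library to summarize what a token grants. The function name `describe_sas` and the example URL are hypothetical, and the permission table covers only the most common letters.

```python
from urllib.parse import urlsplit, parse_qs

# Common SAS permission letters (not exhaustive).
PERMISSIONS = {
    "r": "read", "a": "add", "c": "create",
    "w": "write", "d": "delete", "l": "list",
}

def describe_sas(url: str) -> dict:
    """Summarize the scope encoded in a SAS URL's query string."""
    query = parse_qs(urlsplit(url).query)

    def first(key):
        # parse_qs returns a list per key; take the first value if present.
        return query.get(key, [None])[0]

    perms = first("sp") or ""
    return {
        "permissions": [PERMISSIONS.get(p, p) for p in perms],
        "expires": first("se"),
        "starts": first("st"),
        # 'srt' only appears on account-level SAS tokens.
        "account_sas": first("srt") is not None,
        "resource": first("sr") or first("srt"),
    }

# Hypothetical URL for illustration; the signature is a placeholder.
example = (
    "https://example.blob.core.windows.net/models/file.bin"
    "?sv=2021-08-06&sr=b&sp=r&se=2023-07-01T00:00:00Z&sig=PLACEHOLDER"
)
print(describe_sas(example))
```

Reading the token this way does not validate its signature; it only shows what the URL claims to grant, which is often enough to spot a link that is broader than intended before it is shared.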

“Due to a lack of monitoring and governance, SAS tokens pose a security risk, and their usage should be as limited as possible. These tokens are very hard to track, as Microsoft does not provide a centralized way to manage them within the Azure portal,” Wiz warned today.

“In addition, these tokens can be configured to last effectively forever, with no upper limit on their expiry time. Therefore, using Account SAS tokens for external sharing is unsafe and should be avoided.”
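The concerns Wiz raises (account-level scope, broad permissions, and distant expiry dates) can be checked mechanically before a SAS URL is published. The sketch below is one hedged way to do that with the Python standard library, assuming the documented SAS query parameters `srt`, `sp`, and `se`. The function name `sas_risks`, the example URLs, and the seven-day threshold are illustrative assumptions, not recommendations from Microsoft or Wiz.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import urlsplit, parse_qs

MAX_LIFETIME = timedelta(days=7)   # illustrative threshold (assumption)
WRITE_LETTERS = set("wdac")        # write, delete, add, create

def sas_risks(url: str, now: datetime) -> list:
    """Return human-readable warnings for a SAS URL; empty if none found."""
    query = parse_qs(urlsplit(url).query)
    risks = []
    if "srt" in query:  # 'srt' is only present on account-level SAS tokens
        risks.append("account-level SAS")
    if set(query.get("sp", [""])[0]) & WRITE_LETTERS:
        risks.append("grants write or delete permissions")
    expiry_raw = query.get("se", [None])[0]
    if expiry_raw is None:
        risks.append("no expiry in token")
    else:
        # Normalize the trailing 'Z' so older Python versions can parse it.
        expiry = datetime.fromisoformat(expiry_raw.replace("Z", "+00:00"))
        if expiry.tzinfo is None:
            expiry = expiry.replace(tzinfo=timezone.utc)
        if expiry - now > MAX_LIFETIME:
            risks.append(f"expiry is more than {MAX_LIFETIME.days} days away")
    return risks

# Hypothetical URLs; signatures are placeholders.
risky = ("https://example.blob.core.windows.net/?sv=2021-08-06&ss=b&srt=sco"
         "&sp=rwdlac&se=2051-01-01T00:00:00Z&sig=PLACEHOLDER")
scoped = ("https://example.blob.core.windows.net/models/file.bin?sv=2021-08-06"
          "&sr=b&sp=r&se=2023-06-23T00:00:00Z&sig=PLACEHOLDER")
check_time = datetime(2023, 6, 22, tzinfo=timezone.utc)
print(sas_risks(risky, check_time))
print(sas_risks(scoped, check_time))
```

A check like this only catches tokens that pass through it. It does not address the tracking gap Wiz describes, since SAS tokens are generated client-side and are not listed anywhere in the Azure portal.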

38TB of private data exposed via Azure storage bucket

The Wiz Research Team found that, besides the open-source models, the internal storage account also inadvertently allowed access to 38TB worth of additional private data.

The exposed data included backups of personal information belonging to Microsoft employees, including passwords for Microsoft services, secret keys, and an archive of over 30,000 internal Microsoft Teams messages originating from 359 Microsoft employees.

In an advisory published Monday by the Microsoft Security Response Center (MSRC) team, Microsoft said that no customer data was exposed and that no other internal services were put at risk by this incident.

Wiz reported the incident on June 22nd, 2023, to MSRC, which revoked the SAS token to block all external access to the Azure storage account, mitigating the issue on June 24th, 2023.

“AI unlocks huge potential for tech companies. However, as data scientists and engineers race to bring new AI solutions to production, the massive amounts of data they handle require additional security checks and safeguards,” Wiz CTO & Cofounder Ami Luttwak told BleepingComputer.

“This emerging technology requires large sets of data to train on. With many development teams needing to manipulate massive amounts of data, share it with their peers or collaborate on public open-source projects, cases like Microsoft’s are increasingly hard to monitor and avoid.”

BleepingComputer also reported one year ago that, in September 2022, threat intelligence firm SOCRadar spotted another misconfigured Azure Blob Storage bucket belonging to Microsoft, containing sensitive data stored in files dated from 2017 to August 2022 and linked to over 65,000 entities from 111 countries.

SOCRadar also created a data leak search portal named BlueBleed that enables companies to find out if their sensitive data was exposed online.

Microsoft later added that it believed SOCRadar “greatly exaggerated the scope of this issue” and “the numbers.”

Microsoft leaks 38TB of private data via unsecured Azure storage www.bleepingcomputer.com 2023-09-18 18:31:44
